The conversation about artificial intelligence in the workplace has moved through several phases: the breathless predictions, the cautious pilot programs, and now the more grounded question of what it actually takes for an organization to benefit from AI-augmented work in practice rather than in theory. The organizations that are capturing genuine productivity gains from AI tools are not the ones that have signed up for the most AI subscriptions or announced the most ambitious AI transformation programs. They are the ones that had already built an operational infrastructure capable of absorbing AI’s outputs and acting on them efficiently. AI that generates a strategy document is only as useful as the organizational system that can review, approve, and implement the strategy in a fraction of the time it would have taken without AI. AI that summarizes a meeting is only as useful as the workflow that turns the summary into actions and tracks those actions to completion. AI that drafts communication is only as useful as the approval and delivery system that can get it to the right audience within the relevant window. The infrastructure readiness question is not about whether your team is using AI. It is about whether your project management tools are designed to make the most of what AI produces once it has produced it.

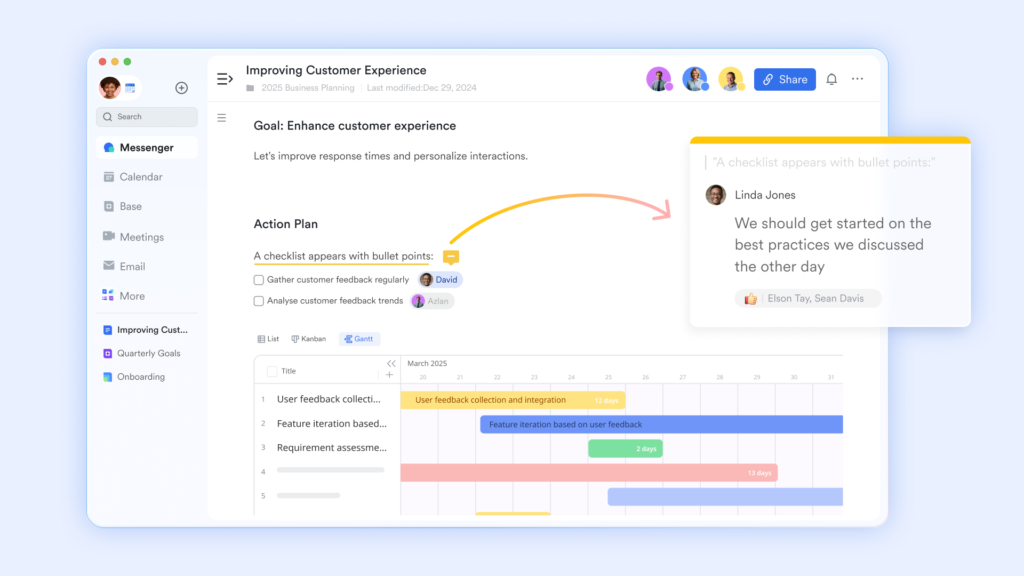

A knowledge base that AI outputs can find and be found in with Lark Docs

Content that AI generates will be used, refined, and built upon by human collaborators. The value of that content depends entirely on whether it can be found by the right people at the right time, whether it can be edited and improved without losing track of the human changes made on top of the AI foundation, and whether it can be connected to the action layer where its outputs translate into organizational results.

- Real-time co-editing allows human collaborators to work simultaneously on AI-generated drafts, so the refinement of AI output is as fast as the human collaboration infrastructure supporting it rather than being slowed by sequential review rounds.

- “Version History” creates a permanent record of how an AI-generated document evolved through human review and refinement, so the contribution of the human editors is as traceable as the AI’s original output and the two can be distinguished when it matters.

- “@mention” within documents allows the human review of AI-generated content to generate action assignments at the point of review, so the gap between an AI output and the human actions it is supposed to trigger shrinks to the minimum technically possible, with no separate action assignment step needed after the review is complete.

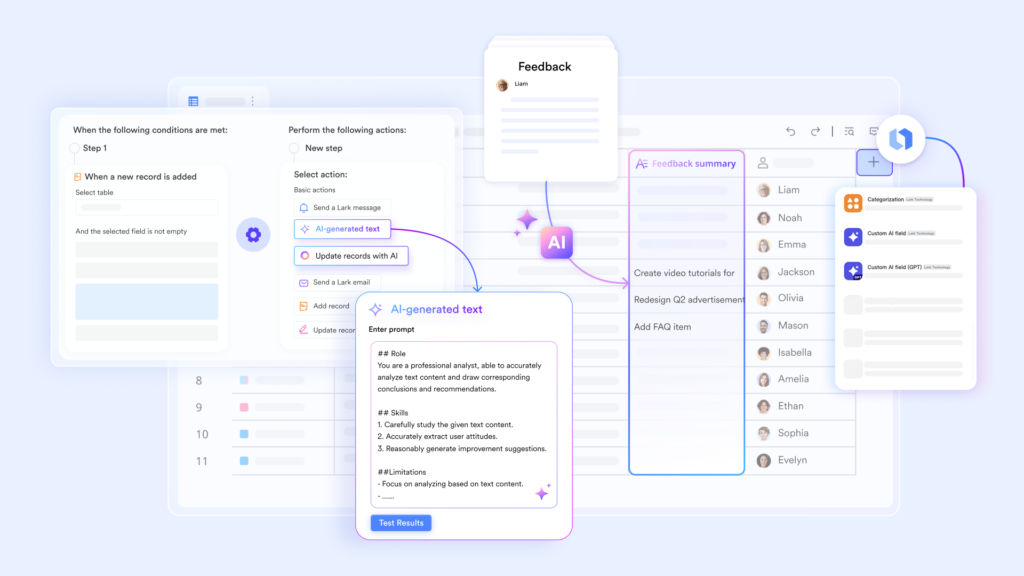

Operational data that AI analysis can be built on with Lark Base

AI analysis is only as good as the data it analyzes, and organizational data that lives in disconnected, inconsistently formatted, partially updated spreadsheets is data that produces AI analysis of limited reliability. The organization whose operational data is live, structured, and consistently maintained in a relational database is the organization whose AI analysis is worth acting on.

- Shared real-time dashboards ensure that the operational data available for AI analysis reflects the current state of the organization’s work rather than the state it was in when the last manual update was made, so the insights generated from that data are based on current reality rather than recent history.

- Automation workflows maintain the data hygiene that AI analysis requires by ensuring that records are updated when they should be updated, fields are populated when they should be populated, and exceptions are flagged when they occur, without requiring manual maintenance that human inconsistency makes unreliable at scale.

- “Granular permission by row and column” allows different categories of operational data to be accessible for AI analysis while protecting the sensitive data whose inclusion in AI analysis would create privacy or compliance concerns, so the AI operates on the data it should have access to rather than all available data.

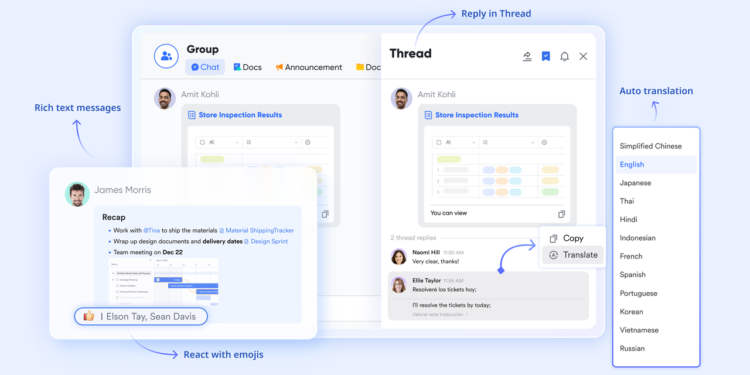

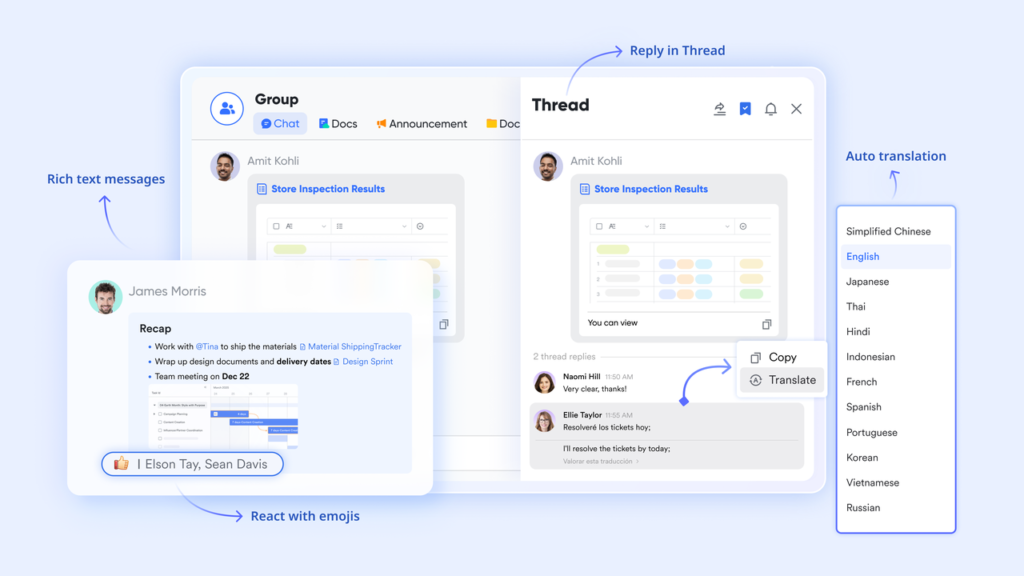

Communication infrastructure that AI meeting intelligence can flow into with Lark Messenger

AI meeting transcription and summarization tools are most useful when their outputs flow directly into the communication channels where the teams who need to act on them are already working, rather than being delivered to a separate system that someone has to remember to check. The communication infrastructure that is designed to receive, distribute, and act on AI-generated meeting intelligence multiplies the value of the AI tool many times over.

- “Chat Tabs & Threads” in meeting-linked groups provide the structured communication channel where AI-generated meeting summaries, action items, and decision records can be shared with the full meeting group immediately after the session ends, so the outputs of the AI meeting tool arrive in the communication environment where the team is already working.

- “Scheduled Messages” allow AI-generated briefings, summaries, and updates to be scheduled for delivery at the optimal moment for each recipient’s working pattern rather than arriving in real time in a way that may interrupt focused work.

- “Read/Unread Status” gives the sender of AI-generated summaries and action assignments confirmation that every recipient has seen the output, so accountability for acting on AI-generated insights is maintained by the communication infrastructure rather than by individual follow-up.

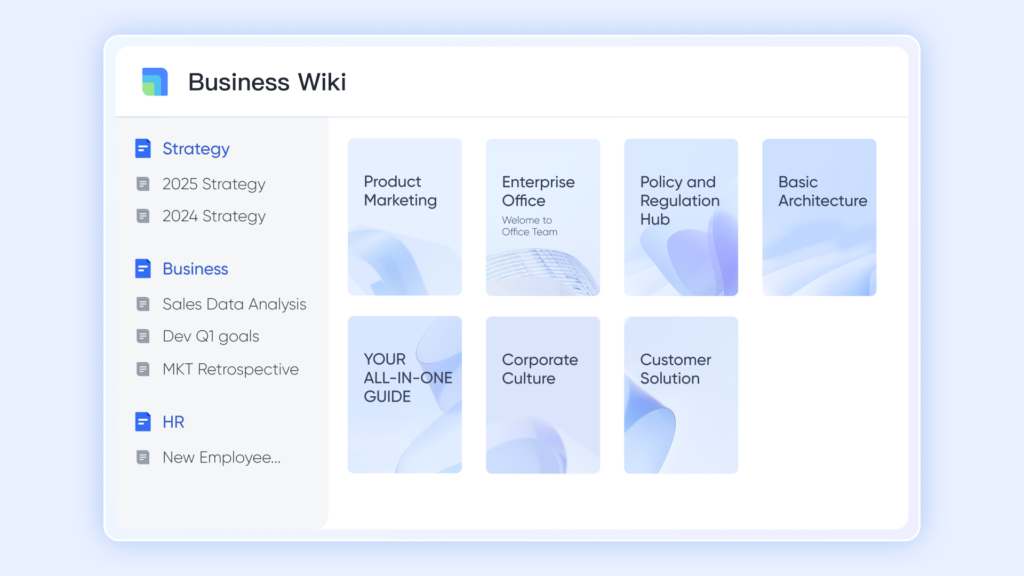

Approval workflows that handle AI-generated outputs at AI speed with Lark Wiki

Organizations that use AI to generate strategic documents, communications, and reports need an approval infrastructure that can review and authorize those outputs at a pace that preserves the speed advantage AI was supposed to provide. An AI that produces a client communication in five minutes and then waits three days for an approval cycle has not delivered a competitive advantage.

- “Advanced Search” allows compliance and approval teams to quickly retrieve the regulatory guidance, precedent decisions, and policy documentation relevant to reviewing an AI-generated output, so the human review of AI content is supported by instant access to organizational knowledge that informs the review rather than requiring the reviewer to locate that knowledge before the review can begin.

- “Permission Settings” allow different categories of AI-generated output to be reviewed by the appropriate authority with access to the appropriate contextual knowledge, so the review process is as calibrated to the AI output’s specific characteristics as the AI tool itself is calibrated to the organization’s specific requirements.

- “Rich Content” pages can carry the full context for any category of AI-assisted decision, including the guidelines the AI was operating within, the human approval decisions that were made on its outputs, and the outcomes of those decisions, in a single organized reference that allows the organization to learn from its AI-assisted decisions over time.

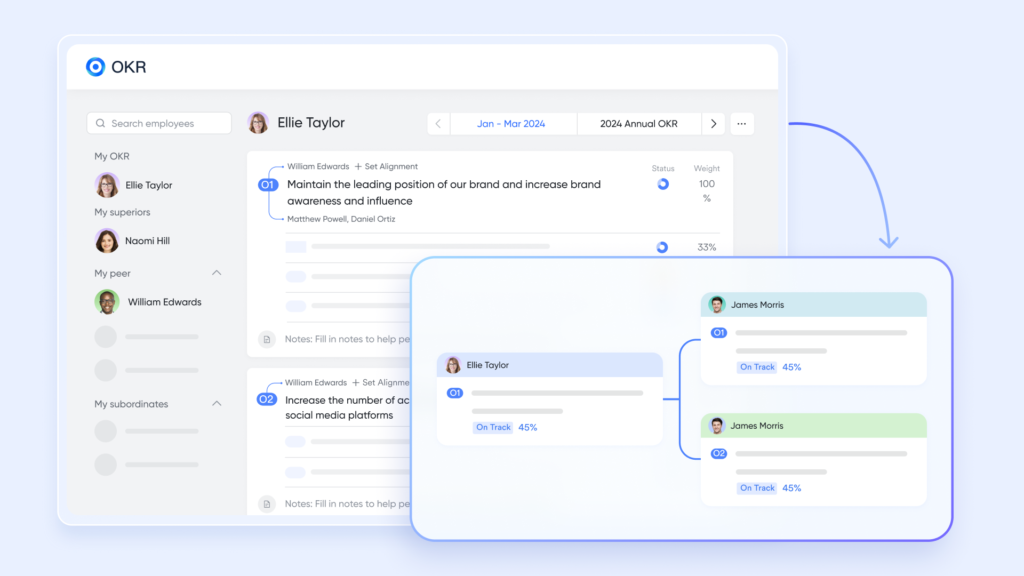

OKR alignment that keeps AI-assisted work strategically directed with Lark OKR

AI tools that are available to every team member without strategic direction produce AI-assisted work that is as misaligned with organizational priorities as the unassisted work it replaces. The organization that gives every team member access to a powerful AI tool without giving every team member clarity about which objectives that AI tool’s outputs should be serving has invested in a capability without investing in the direction that would make that capability valuable.

- Company-wide objective visibility gives every team member using AI tools the strategic context to direct those tools toward the work that creates the most organizational value, rather than directing them toward the work that is most interesting or most convenient.

- Individual key results connected to team objectives create the personal accountability structure that ensures AI-assisted productivity is directed toward organizational goals rather than personal optimization, so the hours recovered by AI efficiency gains are invested in the highest-priority work rather than dispersed across lower-priority activities.

- Real-time key result progress visible to every team member provides continuous feedback that helps teams using AI tools evaluate whether the efficiency gains they are capturing are translating into the strategic outcomes they were supposed to accelerate.

Bonus: Why AI investments underperform without the right operational foundation

Most AI investments underperform their projected returns not because the AI tools are inadequate but because the operational infrastructure that was supposed to absorb and act on their outputs was not designed for the pace at which those outputs can be generated. A team that uses AI to generate strategy documents but reviews them in a sequential email chain generates content at AI speed and acts on it at pre-AI speed. The bottleneck has moved from content generation to content review and action.

Tools like Notion AI and Microsoft Copilot improve the content generation layer. Zapier and Make improve the workflow automation layer. But neither layer addresses the full operational infrastructure (the collaborative review, the approval routing, the action assignment, the knowledge management, and the strategic alignment) that determines whether AI-generated outputs translate into organizational value. Teams evaluating Google Workspace pricing often find that collaboration tools alone are not enough when AI adoption increases output volume. Many add separate AI tools that generate content or insights faster than the surrounding workflows can review, approve, and act on them. Lark provides the operational infrastructure to manage that output: it routes work through review, assigns follow-up actions, and tracks execution in one environment that can better support AI-assisted workflows.

Conclusion

The question of AI readiness is not whether your team is using AI tools. It is whether your operational infrastructure is designed to capture the value of what those tools produce. A connected set of productivity tools that enables real-time collaborative review of AI outputs, maintains the data quality that AI analysis requires, routes AI-generated content through approval at appropriate speed, surfaces the organizational knowledge that AI review requires, and keeps AI-assisted effort directed toward organizational priorities is how organizations translate AI investment into organizational performance rather than into impressive demonstrations that do not compound into competitive advantage.